UAE researchers release open-source medical AI that thinks like a real doctor

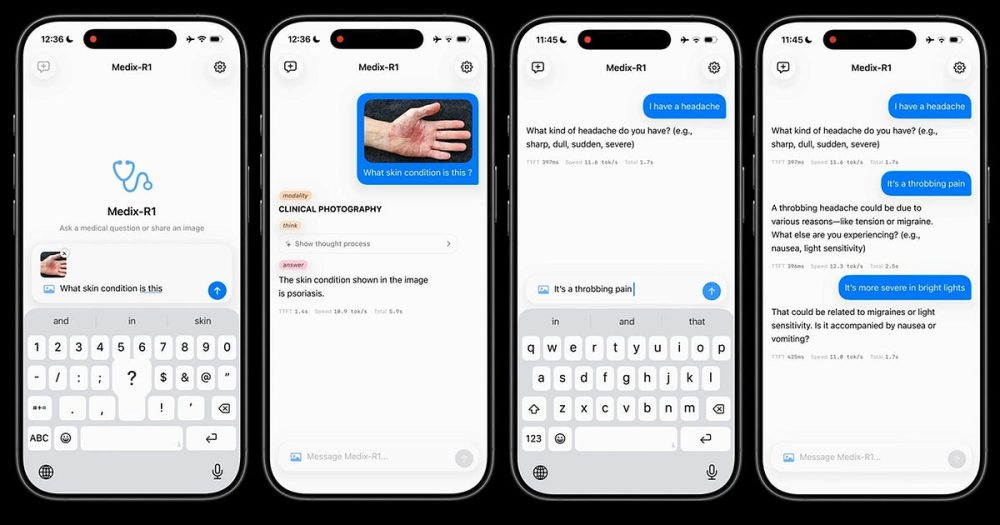

Researchers at Abu Dhabi's Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) have created something different in medical AI. Their new model, MediX-R1, doesn't just pick from multiple-choice answers. It provides open-ended clinical reasoning, much like a human doctor would.

The breakthrough comes from using reinforcement learning to train the AI on free-form responses rather than the tick-box formats that dominate medical AI today. Working with medical doctors from India, the MBZUAI team has produced a model that scored 95.1% on the US Medical Licensing Examination and earned doctor preference in 72.7% of blind reviews.

What makes this particularly impressive is efficiency. The model was trained on just 51,000 instruction examples - a fraction of what most medical AI systems require. Yet it works across 16 different medical imaging types, from X-rays to MRI scans to microscopy images.

How does it work?

MediX-R1 uses a composite reward framework that combines four signals during training: an accuracy measure, a semantic reward based on medical knowledge, a format reward, and a modality recognition component. Combining the signals is meant to prevent the model from gaming any single reward while keeping training stable.
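To make the idea concrete, here is a minimal sketch of how four reward signals could be blended into one training score. It is an illustration only, not the MBZUAI implementation: the `RewardWeights` class, the `composite_reward` function, the weight values, and the assumption that each signal is scored between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    # Hypothetical weights for illustration; the article does not report the real ones.
    accuracy: float = 0.4
    semantic: float = 0.3
    format: float = 0.2
    modality: float = 0.1


def clamp01(value: float) -> float:
    """Clip a signal to [0, 1] so no single component can dominate by being out of range."""
    return max(0.0, min(1.0, value))


def composite_reward(
    accuracy: float,
    semantic: float,
    format_ok: float,
    modality: float,
    weights: RewardWeights = RewardWeights(),
) -> float:
    """Weighted sum of the four signals named in the article (assumed scale: 0 to 1 each)."""
    return (
        weights.accuracy * clamp01(accuracy)
        + weights.semantic * clamp01(semantic)
        + weights.format * clamp01(format_ok)
        + weights.modality * clamp01(modality)
    )


# A well-formatted answer that is wrong on every other count earns only the format share.
format_only = composite_reward(accuracy=0.0, semantic=0.0, format_ok=1.0, modality=0.0)
```

Under these assumed weights, a response that only gets the output format right is capped at a small share of the total, which is one simple way a blended reward can discourage a model from optimizing a single easy signal.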

The model comes in three sizes: 2 billion, 8 billion, and 30 billion parameters. The smallest version can run on a mobile device without internet connectivity. The 8-billion-parameter variant outperforms Google's MedGemma-27B despite having less than a third of its parameters.

Rather than requiring massive datasets of human-annotated clinical reasoning, the reinforcement learning approach lets the model learn from a much smaller set of examples. This dramatically reduces the cost and complexity of training medical AI systems.

Why does it matter?

Most medical AI models excel at multiple-choice questions but struggle with the open-ended reasoning that real clinical practice demands. A doctor examining an X-ray doesn't pick from preset options - they form conclusions based on what they observe and their medical knowledge.

MediX-R1 bridges this gap by providing contextual, free-form responses that better match how clinicians actually work. The model can analyze medical images across 16 different modalities and provide reasoned explanations for its conclusions.

The efficiency gains are significant too. Traditional medical AI requires expensive human annotation from medical experts. The reinforcement learning approach reduces this dependency, potentially making high-quality medical AI more accessible in resource-constrained settings.

The context

Generative AI in healthcare is projected to become a $21.7 billion market by 2032, driven largely by demand for AI assistants supporting clinicians and healthcare operations. Medical AI models are already being deployed for clinical questions, triage support, and report drafting.

But healthcare has an exceptionally low tolerance for error, which makes model training resource-intensive and technically demanding. Most existing approaches remain stuck on multiple-choice formats that don't reflect real clinical reasoning.

MediX-R1 is released as a research prototype under open-source licensing, with all model weights, training code, and datasets publicly available. The researchers stress it's not ready for clinical deployment and note risks including potential hallucination of medical findings. Further evaluation with clinicians is needed before any real-world use.

The project received support from an NVIDIA Academic Grant and an MBZUAI-IITD Research Collaboration Seed Grant, highlighting growing investment in medical AI research across the Middle East and Asia.
